2015-01-20 23:47:22.596 Chatter[52212:595641] WHO: Pronoun

2015-01-20 23:47:22.596 Chatter[52212:595641] WROTE: Verb

2015-01-20 23:47:22.596 Chatter[52212:595641] THE: Determiner

2015-01-20 23:47:22.596 Chatter[52212:595641] DECLARATION: Noun

2015-01-20 23:47:22.597 Chatter[52212:595641] OF: Preposition

2015-01-20 23:47:22.597 Chatter[52212:595641] INDEPENDENCE: Noun

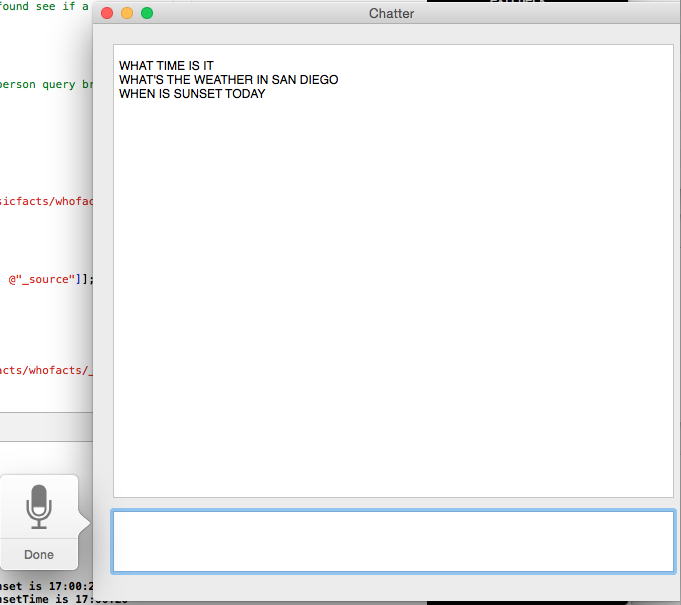

Granted, that's not how I dealt with the above query. I figured a knowledge-system approach would be best for a query engine, so I have a collection of facts dumped into one path of es:

Thomas Jefferson wrote the Declaration of Independence

George Washington was the first President of the United States

Adolf Hitler was the leader of the Nazi Party

Robert Oppenheimer was the father of the atomic bomb

Albert Einstein developed the theory of relativity

Bill Clinton was the 42nd President of the United States

... and so on.

Given any "who" question, we can chop off the "who" and search on the rest:

"who developed the theory of relativity?" --> becomes an es query on "developed the theory of relativity" --> becomes the response "Albert Einstein developed the theory of relativity."

There are similar tricks for the "where" and "when" questions. The "how" is a bit tougher. These are of course simple tricks, neither conclusive nor exhaustive. The good news is that knowledge representation is a fairly well-known area, and given the internet and Google, Wikipedia, and Wolfram Alpha, we have access to a very nice data source.

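As a rough sketch of the chop-and-search trick, here is the idea in Python rather than the app's own code. A plain word-overlap match stands in for the es query, and the names `strip_question_word` and `answer_who` are made up for illustration, so treat this as the shape of the approach and not the actual implementation:

```python
import re

# A few facts copied from the list above; in the real system these live in es.
FACTS = [
    "Thomas Jefferson wrote the Declaration of Independence",
    "George Washington was the first President of the United States",
    "Albert Einstein developed the theory of relativity",
    "Bill Clinton was the 42nd President of the United States",
]


def tokens(text):
    """Lowercase a string and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def strip_question_word(question, word="who"):
    """Drop a leading question word and any trailing punctuation."""
    rest = question.strip().rstrip("?!. ")
    if rest.lower().startswith(word + " "):
        rest = rest[len(word) + 1:]
    return rest


def answer_who(question, facts=FACTS):
    """Return the fact sharing the most words with the chopped question.

    Word overlap is only a stand-in for the relevance scoring es would do.
    """
    wanted = tokens(strip_question_word(question))
    best = max(facts, key=lambda fact: len(wanted & tokens(fact)), default=None)
    if best is None or not (wanted & tokens(best)):
        return None  # nothing matched; leave it to another subsystem
    return best + "."


print(answer_who("who developed the theory of relativity?"))
```

Returning `None` when nothing overlaps leaves room for the other subsystems to have a go at the input.
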
The personalized recognition was more for things like location, interests, calendar, birthdays, contact information, identifying relationships, etc. The idea is that as you interact with the system, it should "remember" information about you, your relationships, and more. At some point the system should know that I have a child (if I've mentioned it) and even one day spontaneously inquire as to the child's well-being. Still working on this... ;-)

The last part is the "fall back". AIML chat bots have come a pretty long way in simulating basic conversation skills. If the other systems don't respond with anything, it is up to an AIML-based chat bot to reply.
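
The fall-back ordering can be sketched as a simple chain: try each subsystem in turn and take the first non-empty reply. This is Python for illustration only. The three responder functions and the canned reply are hypothetical placeholders, not the real query engine, personalization layer, or AIML bot:

```python
def respond(utterance, responders):
    """Return the first non-empty reply, trying responders in order."""
    for responder in responders:
        reply = responder(utterance)
        if reply:
            return reply
    return None


# Hypothetical stand-ins for the real subsystems.
def knowledge_responder(utterance):
    known = {
        "who developed the theory of relativity?":
            "Albert Einstein developed the theory of relativity.",
    }
    return known.get(utterance.strip().lower())


def personal_responder(utterance):
    return None  # placeholder: personalized recognition is not sketched here


def aiml_fallback(utterance):
    return "Tell me more."  # canned line standing in for an AIML bot's reply


# Order matters: the fall-back goes last so it only answers when nothing else does.
CHAIN = [knowledge_responder, personal_responder, aiml_fallback]

print(respond("Who developed the theory of relativity?", CHAIN))
print(respond("Nice weather today", CHAIN))
```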

The response is fed to NSSpeechSynthesizer, for which we act as the delegate. When we receive the didFinishSpeaking callback, we know that we can re-enable the recognizer for the next round.
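
The speak-then-listen handshake boils down to a small piece of state. Below is a language-neutral sketch in Python. `FakeSynthesizer` and `Conversation` are invented for illustration and only mimic the delegate pattern; in the real app the callback would be the NSSpeechSynthesizerDelegate method `speechSynthesizer:didFinishSpeaking:`. Turning the recognizer off before speaking is an assumption, presumably so it does not pick up the synthesized speech:

```python
class FakeSynthesizer:
    """Hypothetical stand-in for NSSpeechSynthesizer (no audio is produced)."""

    def __init__(self):
        self.delegate = None
        self.spoken = []

    def start_speaking(self, text):
        self.spoken.append(text)

    def finish(self):
        # Simulates the moment the real synthesizer finishes and calls back.
        if self.delegate is not None:
            self.delegate.did_finish_speaking(self, True)


class Conversation:
    """Gates the recognizer on the synthesizer's 'finished' callback."""

    def __init__(self, synthesizer):
        self.synthesizer = synthesizer
        self.synthesizer.delegate = self
        self.listening = True

    def reply(self, text):
        self.listening = False  # assumption: recognizer is off while speaking
        self.synthesizer.start_speaking(text)

    def did_finish_speaking(self, sender, finished):
        self.listening = True  # re-enable the recognizer for the next round


synth = FakeSynthesizer()
conversation = Conversation(synth)
conversation.reply("Albert Einstein developed the theory of relativity.")
print(conversation.listening)  # still speaking
synth.finish()
print(conversation.listening)  # ready for the next round
```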

Alas, other projects and interests have caught up with me, and this is really just me taking notes while this gets pushed to the back burner. So until next time... adieu.